Translates as "Tinfoil hats: don them"

Well, they might be if a team of Japanese scientists’ claims are to be believed (and exaggerated (rather a lot)). They’ve managed to reconstruct images a person was looking at, by analysing their brain activity. Spook!

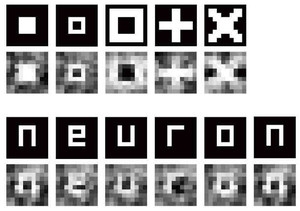

To do this, they hooked up a functional magnetic resonance imaging (fMRI) machine to some people’s heads, showed them a set of 10 x 10 black and white image grids as control data, and recorded the blood-oxygen levels of parts of the visual cortex while they looked on. From this they worked out which specific brain activity patterns corresponded to which image grid patterns, giving them a mapping from brain activity -> image being viewed. To test it out, the next stage was to show the subjects different 10 x 10 image grids (of recognisable things like letters), pass the recorded brain activity through their mapping function and reconstruct the original images. And if you believe the image above, it worked!
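For the curious, here is a toy sketch of that train-then-reconstruct idea. It is emphatically not the researchers' method: the "brain activity" is simulated (each made-up voxel sees one pixel, a bit of its neighbours, and some noise), the decoder is a plain ridge least-squares fit, and every name in it (`simulate_activity`, `fit_decoder`, `reconstruct`, `run_demo`) is hypothetical.

```python
import random


def simulate_activity(image, side, rng, blur=0.15, noise=0.1):
    """Toy 'voxel' responses, a hypothetical stand-in for real fMRI data.

    Each voxel responds to one pixel, plus a fraction of that pixel's four
    neighbours, plus Gaussian noise.
    """
    activity = []
    for r in range(side):
        for c in range(side):
            v = float(image[r * side + c])
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                rr, cc = r + dr, c + dc
                if 0 <= rr < side and 0 <= cc < side:
                    v += blur * image[rr * side + cc]
            activity.append(v + rng.gauss(0.0, noise))
    return activity


def solve(M, B):
    """Solve M X = B by Gauss-Jordan elimination with partial pivoting."""
    n = len(M)
    aug = [list(M[i]) + list(B[i]) for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda i: abs(aug[i][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        p = aug[col][col]
        aug[col] = [x / p for x in aug[col]]
        for i in range(n):
            if i != col and aug[i][col] != 0.0:
                f = aug[i][col]
                aug[i] = [x - f * y for x, y in zip(aug[i], aug[col])]
    return [row[n:] for row in aug]


def fit_decoder(activities, images, ridge=1e-3):
    """Ridge least squares: find D so that [activity, 1] . D approximates the pixels."""
    feats = [a + [1.0] for a in activities]  # trailing 1.0 acts as a bias term
    cols = list(zip(*feats))       # one tuple per feature
    pix_cols = list(zip(*images))  # one tuple per pixel
    n, k = len(cols), len(pix_cols)
    gram = [[sum(x * y for x, y in zip(cols[i], cols[j])) + (ridge if i == j else 0.0)
             for j in range(n)] for i in range(n)]
    rhs = [[sum(x * y for x, y in zip(cols[i], pix_cols[j])) for j in range(k)]
           for i in range(n)]
    return solve(gram, rhs)


def reconstruct(decoder, activity):
    """Map one activity vector back to a black/white pixel grid (flattened)."""
    feat = activity + [1.0]
    k = len(decoder[0])
    scores = [sum(f * row[j] for f, row in zip(feat, decoder)) for j in range(k)]
    return [1 if s > 0.5 else 0 for s in scores]


def run_demo(side=10, n_train=300, n_test=20, seed=0):
    """Train on random grids, then return pixel accuracy on unseen random grids."""
    rng = random.Random(seed)

    def random_image():
        return [rng.randint(0, 1) for _ in range(side * side)]

    train = [random_image() for _ in range(n_train)]
    decoder = fit_decoder([simulate_activity(im, side, rng) for im in train], train)
    tests = [random_image() for _ in range(n_test)]
    hits = sum(p == q
               for im in tests
               for p, q in zip(im, reconstruct(decoder, simulate_activity(im, side, rng))))
    return hits / (n_test * side * side)
```

Calling `run_demo()` uses a 10 x 10 grid like the one in the study and takes a few seconds in pure Python; `run_demo(side=5)` is much quicker. The point is only the shape of the process: record activity for known images, fit a mapping, then push fresh activity through it.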

Scary much?

Well, for one (minor niggly) thing, the released image (sourced directly from the Japanese website linked below) contains hugely similar ‘reconstructed’ images for both the first and last ‘n’ letters (accounting for jpeg encoding). If they’d been recorded from separate viewings of the image, I’d expect more differences.

For another (major) thing, it’s one thing to analyse the visual cortex, with a hugely expensive machine right next to your head, for a very restricted set of distinct brain patterns keyed to visual data, over a long time (reported 12 seconds per image), after a nice control data set to train it… and quite another to actually read and visualise thoughts (unless you’re a batshit insane conspiracy theorist, of course, in which case they’ve been doing it for years already!!!1) or even more general visual data.

Still, a nice step in the right direction, eh?

If you can read Japanese click this, if you can’t then click this, which has more detail than I’ve gone into.